From May 18th through the 20th, four members of Compass’ leadership team (myself included) ventured to the SuperACAC conference in Reno. We attended a number of educational sessions, including those organized by key administrators at the College Board and ACT. To provide counselors and families with a succinct overview of the latest admission testing updates – especially in light of the sweeping changes to the SAT – I have written summaries and commentary for three notable sessions.

ACT Innovation and Insight: Why More Students are Taking the ACT

Don Pitchford, ACT | James Marviglia, Cal Poly SLO

This session proved to be highly controversial. My colleague Adam Ingersoll wrote an assessment that was shared online and backed by many other attendees whose comments included “absolutely awful,” “borderline unethical,” “just not right,” and “caused a first-year counselor to panic.” Here is Adam’s take:

You don’t expect statements and advice from a senior official at a testing agency to be as factually and ethically shaky as what we were treated to in this session. Some highlights (lowlights) follow. I’m paraphrasing, but not very loosely. He said:

- Take the ACT as many times as possible. Colleges see you sending them that 4th score and they’re impressed. Send just one score and they’ll see you as a “stealth applicant.”

- Did you know that we (ACT) show colleges where you ranked them on your four free score reports? Better be thinking about that!

- So we have this new product called Aspire. Schools love it! Rollout problems? Well, some schools couldn’t figure out the “onboarding.” Yes, we know it’s very difficult logistically for you to administer it by computer. Yes, we know most of your students will take the ACT on paper, not on a computer. But we charge you more for paper-based testing because computer testing is cheaper for us.

- Why do so many more students choose the ACT over the “other test?” Because it’s easier! (Unclear whether colleges are also supposed to believe the ACT is ‘easier’ and find this virtuous.)

- Counselors: you’re used to advising students to try both the SAT and ACT, right? Well you don’t have to anymore! (Because, wink-wink, the revised SAT will be just like the ACT anyway.)

Where to even start? Let’s mostly ignore #’s 3 (delusional), 4 (silly), and 5 (specious).

Number 1 is patently outrageous. Never mind that the presenter offered his 8th grader’s experience as evidence of the merits of this approach. Most off-putting was his clear insinuation that colleges will be impressed by this serial test-taking behavior. He also seemed to be under the impression that his suggestion jibes with mainstream advice given by high school counselors. A counselor in the audience who works primarily with under-represented students was quick to correct him. I wish she had asked him why then doesn’t the ACT give out unlimited fee waivers instead of just two?

Number 2 was spectacularly tone-deaf. The speaker calling attention to this unfortunate policy of ACT’s was bad enough. But to offer it up to a room full of counselors as a clever tactic which their advisees should employ was shocking. In other words, a presenter at our association’s annual conference proudly announces that his company offers a way for colleges to subvert the intent of the Statement of Principles of Good Practice II.B.2 related to student expectations of privacy regarding their preferences of where they hope to attend.

The presentation was strewn with statements like the above that were nebulous and disconcerting. Was #2 just meant to be a general FYI for all? A tip directed at colleges seeking yield optimization strategies? A confession that students who don’t know about this may be disadvantaged? A cry for help for ACT to find the resolve to do better on this front than the FAFSA and Common App?

Ultimately and perhaps unfortunately, College Board and ACT are vendors at these conferences every bit as much as the for-profits littering the exhibit hall. You will see accountable-to-no-one for-profit vendors give far more informative and balanced presentations this week. Given their size and relevance, the two non-profit gorillas should be held to at least as high a standard.

Adam and other attendees in this session were asked by more than 100 counselors to share their concerns with NACAC and ACT leadership, and they will be doing so.

Advising the Class of 2017 around Standardized Test Changes

Rachel Mead, Ryan Kiick, Katie Noone, The Princeton Review

The presenters, high-level administrators at the Princeton Review (TPR), advised attendees on how to help students navigate the debut of the redesigned SAT and determine which exam to prepare for – current SAT (cSAT), ACT, or redesigned SAT (rSAT). I will briefly paraphrase TPR’s advising while responding with Compass’ perspective when appropriate:

Much like the ACT, rSAT items will more closely align with reading, language, and math concepts taught in school. The ‘aptitude-based’ or ‘reasoning’ style of questioning will be replaced by an ‘achievement-based’ style of questioning that students will be more familiar with.

We are in agreement here.

Because Reading and Language passages may be pulled from cryptic ‘Founding Historical Documents,’ it is likely that ESL students may have more trouble on the rSAT than the cSAT or the ACT.

Based on our analysis of items on the released rPSAT, it seems that the original emphasis on Founding Historical Documents may have been slightly exaggerated in the rSAT specs. The effect of including historical documents in reading passages – especially as it relates to performance among ESL and minority students – has yet to be seen.

Students who are planning to take the final administration of the cSAT in January should plan to register far in advance. We may see a surge in popularity that could quickly book testing sites.

This is excellent advice.

TPR is now offering a new hybrid test called the ‘ACT-SAT StartUp’ that will help students determine their preference for ACT or rSAT. The StartUp will include a sampling of questions from both tests and, upon completion, will include a score report that tallies raw score and provides explanations for all questions.

For starters, Compass frowns upon tests constructed by third parties. Third party exams tend to lack predictive validity in comparison to exams released by the test-manufacturers (i.e. the College Board and ACT, Inc.). Although questions from third party exams look legitimate at first glance, they do not undergo the same sophisticated screening as official test items. Consequently, third party test items may inadvertently be easier or more complex than actual test items.

Secondly, hybrid tests (seductively) oversimplify the decision-making process between admission tests. To develop a well-reasoned preference for SAT or ACT, a student needs to experience these exams in their entirety. Also, even though most students’ scores tend to correlate on both SAT and ACT, there are exceptional cases when practice test scores significantly diverge from one another. Stated frankly, meaningful differences in test performance cannot be divined by an abbreviated ‘testing sampler.’

Before the presentation ended, a counselor asked TPR reps if most rising juniors (class of 2017) would achieve a ‘peak performance’ by the cSAT’s final administration in January of 2016. One of the reps explained that the majority of test-takers will have been exposed to all of the relevant cSAT content/coursework by the end of 10th grade. Consequently, it is reasonable to presume that students can adequately prepare for the cSAT and achieve their personal bests by the winter of junior year.

In our own practice, upon examining the official test scores of thousands of students, we see the greatest score increases among students who have more time to mature, improve their academic skills, and develop a comprehensive preparation plan. Typically, our students achieve peak test scores by spring of 11th grade or the fall of 12th grade – often taking 2 or 3 official tests on record before obtaining the desired result. Apart from students who already show a penchant for admission tests (e.g. excellent cPSAT scores), the cSAT’s compressed testing timeline makes it impractical for test-takers interested in comprehensive, sophisticated preparation.

College Board and Khan Academy: World-Class, Free Tools for Students

Alicia Ortega & Tierney Kraft, The College Board | Nikki Danos, Forest Ridge School

This session was devoted to exploring the partnership between the College Board and Khan Academy. Two College Board reps described the roll-out of Khan’s online test preparation interface, while a high school counselor, Nikki Danos, explained how the interface was being implemented at her school (Danos’ school was chosen as a pilot site for Khan’s resources). Here is a synthesis of significant information from the session:

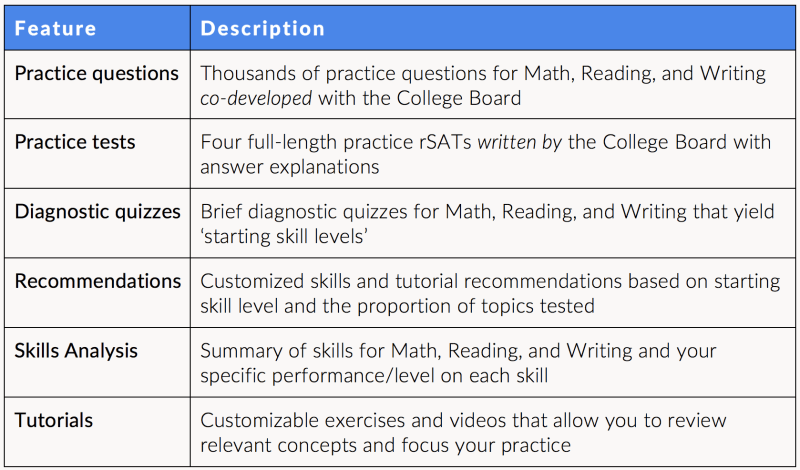

The College Board reps clarified the timeline for the release of rSAT preparation materials via Khan. By the first week of June (2015), the Khan rSAT interface will go live with the following:

Based on the aforementioned features of the Khan interface, there are a few items that require unpacking:

First, the co-developed practice questions used in online quizzes and tutorials likely do not have the same validity as questions on the practice rSATs written by the College Board. Performance on co-developed practice questions may not equate to similar performance on the College Board’s practice tests.

Second, the four full-length practice tests that can be taken online via Khan will also be downloadable/printable for paper-and-pencil completion. Practice tests may also be purchased in the form of a printed book published by the College Board – much like the existing Official SAT Study Guide (OSSG). Regardless of how students take these practice tests, estimated scaled scoring – on the ‘returning’ 1600-point scale – will not be available until later in the year (exact date TBD). Prior to scaled scoring, students can only obtain a raw score – a total count of correct and incorrect questions.

Third, during a discussion of the online full-length practice tests, Nikki Danos chimed in and said that her students were unable to impulsively jump between multiple tests. The practice tests were sequentially ‘unlocked’ as students completed them in their entirety. This is a smart feature, because it helps maintain the diagnostic integrity of the tests. What remains unclear is whether or not the locking/unlocking feature will allow students to download/print the four exams all-at-once.

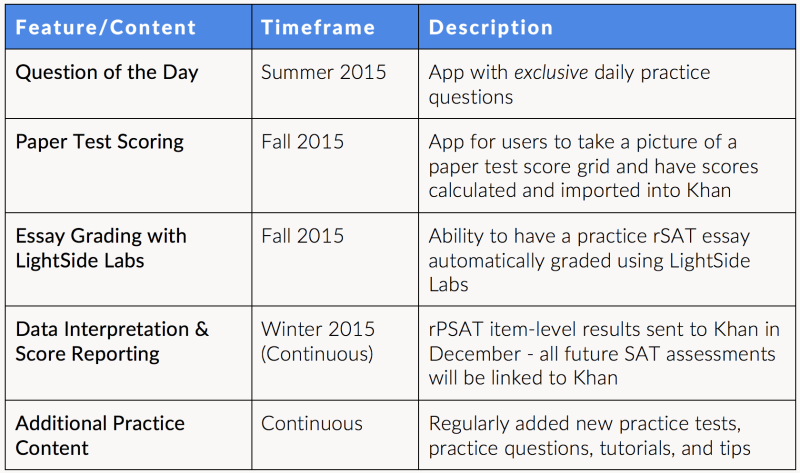

The College Board reps also reviewed additional apps and features of the Khan interface that will be available in the near future. See the table below:

While showcasing these forthcoming apps, the College Board reps made a couple more claims:

Students can obtain a customized test prep plan via Khan by either 1) completing online diagnostic quizzes, or, 2) linking their College Board account with official test results (starting with the rPSAT) to the Khan interface. We assume that practice test results (once submitted to Khan) will also shape the automated preparation plan, but this was not confirmed during the session.

College Board reps were quick to say that no student information captured on the Khan site will be shared with other parties, and that it is entirely secure.

On a final note, regarding the continued release of full-length practice tests, it is unclear when additional exams will ‘drop’ beyond the initial four in June. During College Board’s regional summit in February, reps announced the publishing of four rSAT practice tests and the staggered release of four additional practice tests in the fall of 2015 (co-developed by College Board and Khan). The additional four co-developed practice tests were not mentioned during the SuperACAC session, which may suggest that they are still in development.

Should you have more specific questions about the material covered in this post, please write a comment or email me directly at matt@compassprep.com. We realize that this information can be overwhelming for those not regularly steeped in the world of admission testing, and we hope to continue disentangling the ambiguities around these exams. Let us know how we can help!

Thanks for recapping these sessions for us. It’s one thing to go and learn first hand, but it’s so valuable to hear thoughts/feedback/critiques from test experts. Keep up the good work!

Thank you for the great info! I can always count on Compass giving good, honest advice when it comes to test prep!

Since I wasn’t able to go to the SuperAcac this year, I doubly appreciate this article. So much useful information and clarity! Thank you!